Embryo grading from unreliable labels by positive-unlabeled classification with ranking

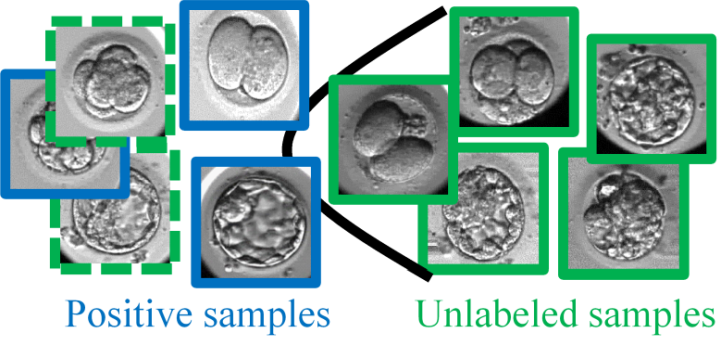

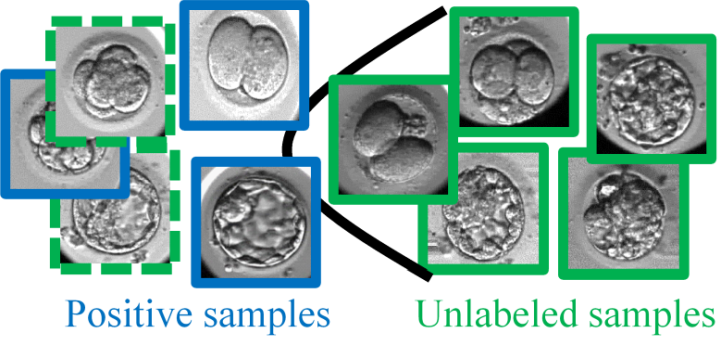

We propose a method for grading human embryos from their images. Embryo grading has previously been formulated as positive-negative classification (i.e., live birth vs. non-live birth). However, negative (non-live birth) labels collected in clinical practice are unreliable: when a non-live birth embryo has chromosome abnormalities, its visual features can be indistinguishable from those of positive (live birth) images. To alleviate the adverse effect of these unreliable labels, our method employs Positive-Unlabeled (PU) learning, in which live birth and non-live birth samples are labeled as positive and unlabeled, respectively, and the unlabeled set contains both positive and negative samples. We improve PU learning on a deep CNN with a learning-to-rank scheme; the original scheme is designed for positive-negative learning, and we extend it to PU learning. Furthermore, overfitting in PU learning is alleviated by regularization with mutual information. Experimental results on 643 time-lapse image sequences demonstrate the effectiveness of our framework in terms of recognition accuracy and interpretability. In quantitative comparison, the full version of our method outperforms positive-negative classification by a wide margin in recall (0.69 vs. 0.22) and F-measure (0.42 vs. 0.27). In qualitative evaluation, the visual attention estimated by our method is interpretable when compared with morphological assessments used in clinical practice.
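To make the PU labeling scheme concrete, the following minimal sketch computes a PU classification risk from classifier scores on positive (live birth) and unlabeled (non-live birth) samples. The abstract does not state which PU loss is used, so this sketch assumes the non-negative PU risk estimator from the general PU learning literature with a sigmoid loss. The function names (`sigmoid_loss`, `nn_pu_risk`) and the `class_prior` parameter (the assumed fraction of true positives in the data) are illustrative and not taken from the paper. The ranking extension and the mutual-information regularizer are not shown.

```python
import math


def sigmoid_loss(score, label):
    """Sigmoid loss l(z, y) = 1 / (1 + exp(y * z)), with label y in {+1, -1}."""
    return 1.0 / (1.0 + math.exp(label * score))


def mean(values):
    """Arithmetic mean of a non-empty list of floats."""
    return sum(values) / len(values)


def nn_pu_risk(pos_scores, unl_scores, class_prior):
    """Non-negative PU risk (an assumed, generic estimator; not the paper's loss).

    pos_scores:  classifier outputs for positive (live birth) samples
    unl_scores:  classifier outputs for unlabeled (non-live birth) samples
    class_prior: assumed fraction of true positives in the data (hypothetical)
    """
    # Risk of labeled positives being scored as negative.
    pos_risk = class_prior * mean([sigmoid_loss(s, +1) for s in pos_scores])
    # Negative-class risk: treat unlabeled samples as negative, then subtract
    # the contribution of the positives hidden inside the unlabeled set.
    neg_risk = mean([sigmoid_loss(s, -1) for s in unl_scores]) - class_prior * mean(
        [sigmoid_loss(s, -1) for s in pos_scores]
    )
    # Clamp at zero so the estimated negative risk cannot become negative.
    return pos_risk + max(0.0, neg_risk)


if __name__ == "__main__":
    # Toy scores only; these are not data from the paper.
    print(nn_pu_risk([1.2, 0.4], [0.3, -0.8, -1.5], class_prior=0.4))
```

The clamp at zero is the design choice that matters here: without it, a flexible model such as a deep CNN can drive the estimated negative risk below zero, which is one way overfitting shows up in PU learning.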